Development Articles

Angular and Python Are Marketing Wrong

by Stephen Fluin 2015.05.16

Over the last several years, developers around the world have been gently nudged to update the version of Python they are using, and Angular is poised to do the same.

A new version, a new direction

Python 2.7.9 is the default in Ubuntu's latest release. This is somewhat discordant with the fact that the latest version of Python is actually 3.4.3. With Python 3, the creators of Python tried to improve the language dramatically by refocusing on what was important to them in a language. The problem with this approach is that they broke backward compatibility, and left Python 2.x in a state where it was easier for developers to keep using the older version than to update to 3.x. Python 3 was introduced in 2008! That was 7 years ago, and developers are still reluctant to switch.

Angular is headed in the same direction with its current work on Angular 2. Angular 2 (just like Python 3) breaks compatibility with the old version, introduces new language constructs, and tries to achieve a set of admirable goals including performance and simplicity. The problem is that it is trying to introduce too many things at the same time. To migrate from AngularJS (Angular 1) to Angular 2, a developer has to learn and implement a number of changes to both the language they use to write applications and the toolset they use to build and deploy them.

The problem with Python 3

I may just be a terrible developer, but from my perspective the problem with Python 3 is that it got rid of all of the convenience tools included in the language. Everything from the print statement to the way you interact with files was dramatically changed to handle Unicode and edge cases better. In my view, this made the new language better at edge cases and worse at nominal cases.
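For readers who haven't made the jump yet, here is a minimal Python 3 sketch of the two changes mentioned above: `print` becoming a function, and file I/O separating decoded text (`str`) from raw `bytes`. The file name and its contents are made up for the example.

```python
import os
import tempfile

# Python 2 spelled this as a statement:  print "Hello, world"
# In Python 3 that line is a SyntaxError; print is now an ordinary function.
print("Hello, world")

# Files opened in text mode encode and decode using the encoding you give them.
path = os.path.join(tempfile.mkdtemp(), "greeting.txt")
with open(path, "w", encoding="utf-8") as f:
    f.write("Bonjour, café")

with open(path, "r", encoding="utf-8") as f:
    text = f.read()   # a str of 13 characters

# Binary mode skips decoding and returns the raw bytes on disk.
with open(path, "rb") as f:
    raw = f.read()    # 14 bytes, because "é" takes two bytes in UTF-8

print(type(text).__name__, len(text))  # str 13
print(type(raw).__name__, len(raw))    # bytes 14
```

The extra ceremony (choosing an encoding, choosing text or binary mode) is exactly the trade-off described above: it makes non-ASCII data behave predictably, at the cost of more thought for the simple case.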

The problem with Angular 2

Angular 2 is headed down a similar path, with developers already groaning about having to do things a different way. While I believe each of the changes in Angular 2 was made for good reason, together they are too much at once for the average developer to absorb.

Take a look at all of the new things a developer has to do or think about:

- Switching from JavaScript to ES6 or TypeScript with compilation - Even if you don't want to switch, Angular 2 was written in TypeScript, and most of the examples that exist today are TypeScript examples. If you ask the average developer what the differences between JavaScript, ES6, and TypeScript are, they would have a lot of trouble explaining them.

- Shift to Components - Now, instead of grouping controllers and views into their respective structures, everything is broken into components, where the view and corresponding controller live together. This is a structurally beneficial change, but it once again assumes you are building a complex application, and it increases the work needed simply to get started.

- Loss of Directives - Directives have disappeared and been replaced by Components and Directives (which are a type of component). This terminology is confusing.

- Loss of Existing Libraries - Because of the change in formats for Components and the loss of Directives, the huge number of AngularJS modules available on the internet are made useless for these types of projects.

- Double Binding Magic is gone - There's a magic moment when a developer connects an ng-model to a variable in the view. With the new Component model, this magic is gone because it feels like you have to create the wiring yourself, and your application will require more code just to get started (although in fewer places).

The Solution

From my perspective, the solution is simple. Eliminate the frustration and fears related to the migration path by designing, building, and marketing these "major revisions" as new languages.

Developers are used to, and often excited by, the adoption of a new language or framework. When a major revision with breaking changes is created, developers have to weigh their positive feelings about the past framework against the additional time, energy, and cost associated with updating their knowledge and skillsets, without the corresponding buzz that comes from adopting something new and exciting.

Perhaps if we had Cobra and Nentr (names I have made up for Python 3 and Angular 2 respectively), developers would be able to evaluate these languages on their own merits, rather than being forced into an "upgrade or be left behind" mentality.

permalink

Create Your Own Mobile App Privacy Policy

by Stephen Fluin 2014.06.08

One of the necessary evils of the world is the need for a privacy policy when you develop a mobile application. If you write your own, you probably aren't going to cover everything. There are a huge number of paid services out there, but their complexity and lack of transparency can be very frustrating.

After some searching, I found a service that provides free access to a template that can act as the basis for your own privacy policy. It even guides you through the process of modifying and customizing the policy to your needs.

Check it out here: http://www.docracy.com/6513/mobile-privacy-policy-geolocated-apps-

permalink

Chrome Cordova Apps - cca create bug

by Stephen Fluin 2014.05.30

Failed to fetch package information for org.apache.cordova.keyboard

If you have experienced this error, you aren't alone. Any projects being created with version v0.0.11 of Chrome Cordova Apps (cca) are now failing to be set up properly.

The problem comes from a desynchronization between Cordova's plugin library and the cca tool. The developers have fixed this in the latest source code on GitHub, but the fix has not yet been released on npm.

The easiest way to get around this bug is to edit

, and change line 53 from:

to

After that, cca should work just fine again, allowing you to create new projects.

permalink

A Year of Android Studio

by Stephen Fluin 2014.04.30

It has been almost a year since Google IO 2013, where Google announced a new IDE for Android called Android Studio. Android Studio has been a major problem for developers and development companies since then, because of the schism between Eclipse and Android Studio.

Developers have to decide whether to build applications using Eclipse and Ant, the stable toolchain they have been using for years, or to adopt the less stable and constantly changing Android Studio. What complicates this matter is that new Android capabilities, support, and documentation are coming to Android Studio faster than to Eclipse.

Gradle at the Heart

Whether JetBrains or Eclipse have figured out the "best" way to build an IDE doesn't really matter to me as a developer. What matters most is that my toolset gets out of the way of me writing great applications.

This may be my naivete or lack of skills, but I find Gradle to be a terrible build system. It's not terrible in its feature set, its logical architecture, or its flexible structure. It's terrible because it gets in my way. With Eclipse and Ant, the Android build system is exposed to me as a single file configuring the use of ProGuard, and that file is optional. To get any Android application to work, I just need to tell Eclipse that it's an Android app, and it will handle the build process. My code will work if I create a new project, or if I move my code into someone else's system.

With Gradle, this process breaks down. When I check out code from the internet and try to import it into my project, it fails. If I am missing any one of the five Gradle files whose configured contents I must preserve, nothing is going to work in my application. Why is this build system trying so hard to get in my way?

Example Gradle Failure - ChromeCast Video for Android

Code sharing should be easy. To help developers learn to build applications for ChromeCast, Google has created and released a starter project called CastVideos-android. This project is publicly available on GitHub here: https://github.com/googlecast/CastVideos-android

When I clone this project and attempt to import it into Android Studio, everything seems to go wrong. The first time I attempted to open it, it opened the /gradle/ folder rather than the project file, meaning there was no source code. The second time I tried, I noticed that it wanted me to find the settings.gradle or build.gradle files. I did that, but then my project wouldn't run because of the error "configuration with name 'default' was not found". How am I supposed to fix that? Your default configuration is missing? Gradle is too complicated, and because it lives right next to working code, it gets in the way of development.

SEPARATE BUILD AND DEVELOPMENT PROCESSES. I understand they are connected and the handoff should be as smooth as possible, but look at Yeoman and Grunt: these tools do the same thing Android Studio is trying to do with Gradle, but they do it much better.

Summary

Gradle may be great for huge teams or multi-year development projects, but I would argue that most of Android development doesn't happen this way. Android is most commonly used by small 1-5 person teams that need to write code quickly and get it out to users.

permalink

Back in 2011 I predicted the combination of the Chrome Web Store with the Android Google Play store. While my prediction has not yet come true, we are closer today than ever before.

A couple of months ago, Google began releasing a tool called cca (originally short for Chrome Cordova Apps, but I suspect they will try to shift the name to "Chrome Mobile Apps" for branding purposes). This tool allows you to take an application that you have developed for Chrome (called a Chrome App because it has specific restrictions and implementation details) and run it on Android and iOS.

This means that by building a single application, you can now run it on Windows, Mac, Linux, Android, and iOS. This comes with downsides, such as the lack of the Action Bar and other native UI/UX patterns, but with a strong design sense, these challenges can be overcome to build high-quality cross-platform applications.

Pros

- Single Codebase

- Supported by Google (implying an ever-increasing level of support)

- Unified Payment Mechanisms (between Android and Chrome)

- HTML5 -> HTML / CSS / JS stack development

Cons

- Limited by lack of native UI / UX

- Dependency on Chrome APIs

- CSP Complications - Chrome Apps run without the ability to inject remote JavaScript or images, which can cause some issues if you are used to building pure HTML5 applications.

- Poor HTML5 rendering engine on iOS.

Getting Started

To get started, download node.js. Node.js comes with the Node Package Manager (npm).

With node and npm installed, you will need to download the cca toolset with npm install -g cca

Now that you have cca installed, you will need to connect it to your Android and Java paths; cca will give you instructions inline.

Build your Chrome App

cca will help you get started with cca create <app name>. After running this command, you will get a folder that contains a lot of platform and cordova code, and a special folder called www. The www folder is where all of the code for your app will live.

Open the www folder, add some code to the index.html file, and then, within Chrome, use "Load Unpacked Extension" to select the www folder. Your app should run in the browser. You can then go back to your command line at the root folder for your app and run cca run android.

permalink

Photospheres Begin to Penetrate the Internet

by Stephen Fluin 2013.05.03

A recent improvement to the Google Plus JavaScript widget and API allows us to convert static photosphere images into interactive widgets, composing full rotational views of the world.

See more information and learn how to create your own on Google Developers' Panorama website.

permalink

Start Coding in 2013

by Stephen Fluin 2013.01.03

The skills involved with software development don't have to be black magic. There are several great services that have launched in the past year to help individuals start to work with code, and to work on their programming skills. Learning to program is like learning a spoken language in that doing so has a lot of secondary benefits beyond the direct skill. Programmers have a well developed ability to create mental models for new ideas. They are able to adapt to change more quickly, and can develop a consistent logical framework for thinking about the world or a specific situation.

Codecademy

Codecademy.com has one of the best training programs anywhere for people who want to learn coding. In my experience, tools like this are in many ways superior to formal training or education. While formal training or schooling can give you a lot of the theory, background, and context, services like Codecademy focus the user on writing code and solving problems. This real-world style of experience, where you aren't answering questions but actually writing programs that get run, is a great way to experience not only the fundamentals but also the power of a programming language.

When I first learned programming, whenever I encountered a new language's Hello World, I always got a smug feeling of satisfaction from writing a personalized message like "Bonjour Monde" or "Sup world?" instead of printing "Hello World". This isn't a feature people typically discuss, but in my opinion, the ability for learners to deviate from the course in ways they find interesting is critical to developing these kinds of creative skills.

Codecademy has a decent variety of modern languages. Their most developed content is around JavaScript, which runs natively in your browser, and Python, which they have hooked up to a server-based interpreter. Each course takes you through the entire process of developing an application, from flow control, to variables and math, to functions, classes, and higher-level concepts. The repetition of syntax can be helpful for experienced programmers learning a new language, but building yet another factorial method can be a little frustrating.

permalink

Google Needs to Move Faster With Android

by Stephen Fluin 2012.11.29

Google needs to move faster with Android and adopt a fixed release cycle. The recent launch of Android 4.2 caused several issues for Nexus device users, ranging from new sources of lag and stutter in the user experience to December disappearing entirely from the contacts application. Some may take this as an indication that Google needs to move slower with their Android releases and add more testing stage gates. My feeling is that this is the exact opposite of what they should do. Google, and modern software development in general, benefits from going faster.

What I mean by going faster is building more frequent rolling updates to the platform that do not require user intervention. Chrome for Windows/Linux/Mac is the gold standard representing this ideal. Chrome development happens in the open: all source code for the application is developed under open source licensing and is immediately available after developers commit it to the Chromium project. The Chrome team also has a fixed release cycle, meaning that every 6 weeks all users of the software receive an update. Users can additionally opt in to what they call the Beta or Developer channel to get early access to features in exchange for giving up stability.

If Android moved to a fixed release cycle, it would have many benefits. First of all, partners such as carriers and manufacturers would be able to develop standardized processes around the adoption of Android. More frequent, smaller updates to the Android platform would also force Google to improve its update capabilities. Right now, non-Google Android devices are lucky if they are updated for a full year after launch. If these devices were built and planned with the expectation of a 6-week release cycle, Google would be forced to improve the robustness, speed, and quality of the Android update mechanism. Making this process transparent and irrelevant to users would be a huge win for the Android platform in general, and would continue to encourage user adoption.

permalink

Make your website more SPDY

by Stephen Fluin 2012.04.18

Google's relentless quest to improve performance for all things technological continues. They have just had a huge success with SPDY, their replacement for HTTP for web transactions. Now, with a few simple Linux commands, you can download, install, and activate an Apache module that will speed up everything for all of your Chrome and Firefox users. This is a great thing to do because server support is one half of the SPDY adoption question (browser support being the other), and it will help move the entire web forward into a faster, newer generation. Fortunately, there is no downside: users whose browsers don't yet support SPDY will simply fall back to the slower HTTP transaction.

Install SPDY on Apache by doing the following (assuming a 64 bit system):

That's it, you are done. You will now see Chrome users negotiating with SPDY. You can verify this by visiting Chrome Network Internals (chrome://net-internals).

permalink

HexSLayer Release Updates

by Stephen Fluin 2012.03.15

HexSLayer recently launched a few new versions. In the Android Marketplace (now known as Google Play), HexSLayer 1.0.19 was launched, and in the Amazon App Store, HexSLayer 1.0.20 was launched. These may be the last updates for a few weeks, as there has been a major update to the packaging system I use, known as PyGame Subset for Android. Both of the published versions of HexSLayer use PyGame Subset for Android (PGSA) version 0.9.3. This has been a great framework for me, as I have been able to write applications and games in Python, and then launch them simultaneously on Windows, Mac, Linux, and Android.

With the latest update of PGSA to version 0.9.4, there has been a change to how the system packages applications. Before, the system would copy over the raw Python source files (or .py files), but now the tool packages only the optimized and compiled versions of Python applications (the .pyo file). This means that if you come from an earlier version on Android and upgrade, you will end up having both versions on your device.

The problem with this upgrade path is that it leaves both versions of the files on your device, and the Python subsystem by default runs the old version of the application. That means that even though there is new code on your device, your phone will always run the old code. This has been the core issue preventing me from releasing HexSLayer 1.1.x.

I wrote to the author of PyGame Subset for Android, and discovered that he had a fix prepared that will allow me to continue using the old packaging method, while waiting for my users to upgrade to a version compatible with the new method.

As soon as PGSA 0.9.5 is released, I should be able to repackage and release the next set of features and bugfixes for HexSLayer.

Thanks for playing!

permalink

The primary audience for this article is those who already have a working Android development environment, and are looking to get the latest and greatest from Google running in the emulator. Unfortunately, for me, the default emulator settings that ship with Ice Cream Sandwich (ICS) do not enable me to actually run an emulated instance of the application.

Fix the Ice Cream Sandwich Settings

To make everything work, I updated Eclipse to the latest ADT and SDK versions using both the Eclipse updater and the new SDK manager. Then I downloaded API Version 14 of the SDK, platform, etc. I opened the new AVD manager and created a new AVD targeting ICS.

The default properties of Max VM application heap size of 24 and Device ram size of 512 resulted in build errors for me. I increased these properties and the machine booted like a charm (albeit extremely slowly).

I increased the Max VM application heap size to 64 and the Device ram size to 1500. You may be able to get away with less, I haven't tried it. Make sure you have plenty of unused RAM before you attempt to increase the RAM available to the AVD.
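If you would rather make the same change outside the AVD manager, the values are stored in the AVD's config.ini file. A minimal sketch, assuming the keys are hw.ramSize and vm.heapSize (both in megabytes), which is how the SDK tools appear to write them; the key names and the AVD name "ICS" are assumptions, so check your own file before running it against a real AVD.

```python
import tempfile
from pathlib import Path

def update_avd_config(path: Path, updates: dict) -> None:
    """Set key=value pairs in an AVD config.ini, appending keys that are missing."""
    remaining = dict(updates)
    out = []
    for line in path.read_text().splitlines():
        key, sep, _ = line.partition("=")
        key = key.strip()
        if sep and key in remaining:
            out.append(f"{key}={remaining.pop(key)}")
        else:
            out.append(line)  # leave every other setting untouched
    out.extend(f"{k}={v}" for k, v in remaining.items())
    path.write_text("\n".join(out) + "\n")

# Demo on a throwaway file holding the default values mentioned above.
demo = Path(tempfile.mkdtemp()) / "config.ini"
demo.write_text("hw.ramSize=512\nvm.heapSize=24\nhw.lcd.density=240\n")
update_avd_config(demo, {"vm.heapSize": 64, "hw.ramSize": 1500})
print(demo.read_text())

# For a real AVD (hypothetical name "ICS"; close the emulator first):
# update_avd_config(Path.home() / ".android" / "avd" / "ICS.avd" / "config.ini",
#                   {"vm.heapSize": 64, "hw.ramSize": 1500})
```

Rewriting only the matching lines keeps every other emulator setting exactly as the AVD manager left it.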

That was it for me to get it working. Have fun and build some great applications!

permalink

Optimize PNG images with OptiPNG

by Stephen Fluin 2011.08.17

PNG is a great free, lossless, compressed image format. One thing that may surprise you is that PNGs can actually be further compressed, making the file size smaller than a normal image application will typically achieve. The good news is that there is a tool called optipng that makes it easy to non-destructively improve the layout of PNG files.

On Ubuntu, install OptiPNG with sudo apt-get install optipng. Once it is installed, you can run it in its default mode by typing optipng file.png. If you feel like giving the application lots of time to achieve aggressive compression, you can use the -o flag with a higher optimization level, such as -o7. Comparing the default with -o7, I have found only minimal additional compression that has almost never been worth the additional CPU time.
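The reason this can be lossless is that PNG stores its pixel data with DEFLATE, and the same data can be DEFLATE-encoded many ways; a tool can try several settings and keep the smallest result without changing a single pixel. A minimal sketch of that idea using Python's standard zlib module. This is an illustration of the principle, not OptiPNG's actual search (which also varies PNG filter choices and other parameters), and the sample bytes are made up.

```python
import zlib
from typing import Tuple

STRATEGIES = {
    "default": zlib.Z_DEFAULT_STRATEGY,
    "filtered": zlib.Z_FILTERED,
    "huffman": zlib.Z_HUFFMAN_ONLY,
    "rle": zlib.Z_RLE,
}

def best_deflate(data: bytes) -> Tuple[str, bytes]:
    """Try several zlib settings and keep the smallest output.

    Every candidate is lossless DEFLATE, so any of them decompresses back
    to `data`; only the size differs.
    """
    best_desc, best_out = "", b""
    for level in range(10):
        for mem_level in (8, 9):
            for name, strategy in STRATEGIES.items():
                comp = zlib.compressobj(level, zlib.DEFLATED, zlib.MAX_WBITS, mem_level, strategy)
                out = comp.compress(data) + comp.flush()
                if not best_out or len(out) < len(best_out):
                    best_desc = f"level={level} memLevel={mem_level} strategy={name}"
                    best_out = out
    return best_desc, best_out

# Made-up, highly repetitive bytes standing in for PNG scanline data.
raw = bytes(range(256)) * 64
typical = zlib.compress(raw)           # zlib's default settings
settings, smallest = best_deflate(raw)

assert zlib.decompress(smallest) == raw  # nothing was lost
print(len(raw), len(typical), len(smallest), settings)
```

Because zlib's default settings are among the candidates, the search can never do worse than the default; the trade-off, as with -o7, is many more compression passes for what is often a small gain.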

Below are the results of one such compression attempt:

permalink

In May at Google IO, Google announced that it would begin supporting multiple .apk files, and this feature has finally come to fruition. To get started using this new feature, visit the Android Developer Console and click on your application. At the top of the application information, you will notice that the upload .apk feature is now gone. It has been moved to a new tab at the top of the window, "APK files".

Using the Multiple APK Tool

Each of the .apk files that you upload to the Android Developer Console is examined. The system looks at the Min SDK version and the Target SDK version. The new features allow publishers to maintain multiple .apk files that target different screen sizes, different platform versions, and different levels of OpenGL support. This is simultaneously a boon and a potential disaster. It should decrease the difficulty of maintaining code that targets and supports different feature sets, but at the same time it encourages further fragmentation and differentiation among Android devices. In an ideal world, the platform would be standardized in such a way that multiple versions wouldn't be necessary. In the practical world, Google continues to update and improve the API and platform. It breaks backward compatibility and comes up with new and better ways of performing software development and achieving better user interactions. This means that multiple .apk files will end up supporting the practical fragmentation of the platform.

One of the best ways to leverage these new abilities will be to fork applications at major points in platform revisioning. For example, it will soon make sense to have 3 different APKs, depending on how much historic support is desired. The first group would be platform versions 1.5 - 2.1. The second group would be 2.2-3.2. The final group would be the new Ice Cream Sandwich 4.0 platform version.
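In API-level terms, the three groups above are levels 3-7 (Android 1.5-2.1), 8-13 (2.2-3.2), and 14 and up (4.0). A simplified Python sketch of how a store could choose among such APKs for a given device, assuming each APK declares only a minimum SDK and that the store serves the compatible APK with the highest version code, which is the documented rule for multiple APK support. Real filtering also considers screen size and OpenGL features, and the file names and version codes below are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional

@dataclass
class Apk:
    name: str          # hypothetical file name
    version_code: int  # should increase with the API level each APK targets
    min_sdk: int       # the APK's minimum SDK version

def pick_apk(apks: List[Apk], api_level: int) -> Optional[Apk]:
    """Serve the compatible APK with the highest version code, if any."""
    compatible = [apk for apk in apks if api_level >= apk.min_sdk]
    return max(compatible, key=lambda apk: apk.version_code, default=None)

apks = [
    Apk("app-1.5.apk", version_code=100, min_sdk=3),   # Android 1.5 - 2.1
    Apk("app-2.2.apk", version_code=200, min_sdk=8),   # Android 2.2 - 3.2
    Apk("app-4.0.apk", version_code=300, min_sdk=14),  # Ice Cream Sandwich
]

print(pick_apk(apks, 10).name)  # app-2.2.apk (a Gingerbread 2.3.3 device)
print(pick_apk(apks, 5).name)   # app-1.5.apk (an Android 2.0 device)
```

Giving newer groups higher version codes is what makes the split work: a device that qualifies for several APKs always ends up with the most modern one it can run.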

permalink

Simple Android Tips from Google IO

by Stephen Fluin 2011.05.10

This morning I watched Google IO and learned several important details about Android development that are not readily apparent when one begins developing Android applications. Fortunately, these items are extremely easy to address, and with a few minor changes, the usability and quality of your applications will drastically improve. I loved the presentations given during Google IO, and I could watch days of these sorts of tips, as Android development is a vast and expansive topic.

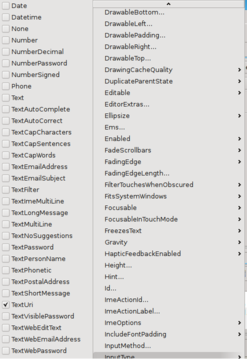

Tip 1: Specify InputType

This tip has to do with any EditText objects that you include in your application. When building Android applications with text fields, you should tell the platform what the purpose of each EditText is. You can do this in one of two ways. The first is by editing the layout used by your application. Here is an example layout that includes an EditText that has been specified as a URL. This will ensure that the keyboard, whenever possible, shows the most appropriate keys for URL entry.

Use the code as follows:

The second method is to set the property via the UI.

Tip 2: Specify Audio Stream Control

This one is a quick fix. By default in Android, the hardware volume buttons control whichever audio is in the foreground. This means that if you have audio playing, they will control that audio; otherwise they will default to the ringer. This is easy to fix by calling the following code in your onCreate:

Tip 3: Specify Target SDK Version

The final tip is something that I most likely missed as part of Android Development 101. By default, your application will not specify a minimum or target SDK. Not specifying a target SDK version means that the Android platform will assume your application targets the lowest SDK version, or the minimum SDK version that you specified. This is becoming more important starting with Honeycomb, because Honeycomb uses the target SDK version to choose some of the visual elements to show. The example that led to my discovery of this was the menu icon. If you target the wrong SDK version, the menu icon will be shown regardless of whether you have a menu.

Fix it by specifying the correct versions. For me at the time of writing, they are as follows:

permalink

Web Development Tool: GZIP Detector

by Stephen Fluin 2011.01.04

When developing web pages for mass consumption, one of the most important things you can do with your content is to make sure it is being transmitted in a compressed manner. Typically for web pages, the way to achieve this is to gzip your content. If your browser supports this, it will report its support to the server as one of the headers when it requests a page. When the web server receives this, if it has the correct setup it will render the content, place it into memory, run a compression algorithm on it, and transmit it. On the client side, your browser will then take the compressed stream, decompress it, and render the page to the user. This may seem like a lot of steps, but in actuality, the slowest part of the request/transmit/rendering process for web pages is usually the internet transaction, and compression can speed things up many times.
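To get a feel for the size difference, here is a small Python sketch using the standard gzip module to do what the server and browser do in the steps above: compress a response body, then restore it. The sample page is made up and deliberately repetitive, so the ratio it prints is illustrative only.

```python
import gzip

# A made-up page; real HTML is less repetitive, so expect a smaller saving.
page = ("<html><body>" + "<p>Hello, world</p>\n" * 200 + "</body></html>").encode("utf-8")

compressed = gzip.compress(page)        # what a gzip-enabled server sends
restored = gzip.decompress(compressed)  # what the browser renders from

assert restored == page  # gzip is lossless
print(f"{len(page)} bytes -> {len(compressed)} bytes "
      f"({100 * len(compressed) / len(page):.1f}% of the original)")
```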

When setting up this type of compression, or when testing the setup of an existing server, it can be very helpful to have a tool that will report whether a given URL is being sent gzipped or not. In order to address that, I have built a GZIP Detector. Simply give it a URL, and it will tell you whether or not that URL is being transmitted in a compressed format. There are many other ways to detect this, but they aren't always handy, or they require specific software or browsers.
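The core of a check like this fits in a few lines: request the URL while advertising `Accept-Encoding: gzip`, then inspect the `Content-Encoding` header of the response. A minimal sketch using only Python's standard library; it is not the code behind the GZIP Detector itself, and the example URL is a placeholder.

```python
import urllib.request

def is_gzipped(headers) -> bool:
    """True if response headers (anything with .items()) report gzip encoding."""
    for name, value in headers.items():
        # Header names are case-insensitive; a value may list several encodings.
        if name.lower() == "content-encoding":
            if "gzip" in (part.strip().lower() for part in value.split(",")):
                return True
    return False

def check_url(url: str) -> bool:
    """Request `url` advertising gzip support and report what the server did."""
    request = urllib.request.Request(url, headers={"Accept-Encoding": "gzip"})
    with urllib.request.urlopen(request, timeout=10) as response:
        # urllib does not decompress bodies itself, so the header is as sent.
        return is_gzipped(response.headers)

# Needs network access; the URL is only a placeholder.
# print(check_url("http://example.com/"))
```

Sending the `Accept-Encoding` header explicitly matters: a server configured correctly will only compress when the client says it can handle gzip, so a request without it would always look uncompressed.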

Hopefully this GZIP Detector will provide value to someone besides me.

permalink

Session management in PHP is one of its greatest strengths. The only downside of its session management is in the expiration period for sessions. By default in Ubuntu the timeout is set to about 24 minutes, which is fine for most situations. When doing article editing or other things that can take more than 24 minutes, however, it's frustrating to submit a form and have a session expiry lose the data you have been working on.

One way to overcome the time limit is to do regular automatic saves via AJAX, but another technique is to increase the session lifetime. To change the session timeout, you need to edit the PHP configuration. In some distributions, this file is stored at /etc/php.ini. In Ubuntu and some other distributions, it is stored at /etc/php5/apache2/php.ini. The relevant setting is session.gc_maxlifetime, which controls how long session data survives before it becomes eligible for cleanup. After making any changes to this setting in php.ini, make sure you restart Apache so that the PHP module loaded by Apache picks up your configuration changes.

In PHP, sessions that have been inactive for longer than gc_maxlifetime are erased probabilistically, whenever the session garbage collector happens to run. By increasing this maximum lifetime, you increase the time for which a session remains valid. You could also change the probability or divisor that control how often cleanup runs (session.gc_probability and session.gc_divisor), but I have found that these settings can simply be left at their original values without negatively affecting how sessions work.
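The interaction of these settings can be modelled in a few lines. Each time a session starts, PHP runs garbage collection with probability session.gc_probability / session.gc_divisor, and a pass deletes sessions that have been idle for longer than session.gc_maxlifetime seconds. This Python model only illustrates that rule (PHP implements it in C), and the 1 / 100 ratio is an example; the values in your own php.ini may differ.

```python
def gc_chance(gc_probability: int, gc_divisor: int) -> float:
    """Chance that any one session start triggers a garbage-collection pass."""
    return gc_probability / gc_divisor

def is_expired(last_write: float, now: float, gc_maxlifetime: int) -> bool:
    """A session is eligible for deletion once it has been idle for more than
    gc_maxlifetime seconds (all times here are in seconds)."""
    return now - last_write > gc_maxlifetime

def maxlifetime_for(minutes: int) -> int:
    """session.gc_maxlifetime is expressed in seconds."""
    return minutes * 60

print(maxlifetime_for(24))    # 1440, the 24-minute default
print(maxlifetime_for(120))   # 7200, room for a two-hour editing session
print(gc_chance(1, 100))      # 0.01, about one session start in a hundred

# Thirty idle minutes spent editing an article:
idle = 30 * 60
print(is_expired(0, idle, 1440), is_expired(0, idle, 7200))  # True False
```

This is why raising gc_maxlifetime alone is enough: the probability settings only change how soon an expired session is actually swept, not whether an active editing session counts as expired.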

permalink

Update for Reddit Imager

by Stephen Fluin 2010.12.23

After having a few pending changes to the Chromium extension Reddit Imager for a few months, I finally got a chance to make some very minor updates to this tool.

The simplest of the changes was adding a few extra strings to the matcher, so now imgur links using "gif", "png", or "jpeg" will match and show full size on Reddit.

The bigger, more important change was adding some naive support for imgur links that aren't direct links to images. Formerly, there were many links that were not supported by the tool. These were links to an "imgur page" that had advertisements and content, rather than just an image. Now, the reddit imager detects and relinks these so that the true image is shown full size, rather than just leaving the link alone.
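The two matching rules described above can be sketched with regular expressions. This is a Python approximation for illustration only: the extension itself runs as JavaScript in Chromium and its actual patterns aren't shown here. It assumes imgur page links look like imgur.com/<id>, and that i.imgur.com/<id>.jpg serves the underlying image; both are assumptions about imgur rather than details from this post.

```python
import re
from typing import Optional

# Direct image links: already point at an image file.
DIRECT = re.compile(r"^https?://(?:i\.)?imgur\.com/\w+\.(?:jpg|jpeg|png|gif)$", re.IGNORECASE)

# Page links: imgur.com/<id> with no extension (albums and galleries don't match).
PAGE = re.compile(r"^https?://(?:www\.)?imgur\.com/(\w+)$", re.IGNORECASE)

def image_url(link: str) -> Optional[str]:
    """Return a URL suitable for showing the image inline, or None."""
    if DIRECT.match(link):
        return link
    page = PAGE.match(link)
    if page:
        # Assumption: imgur serves the image at i.imgur.com/<id>.jpg.
        return f"https://i.imgur.com/{page.group(1)}.jpg"
    return None

# image_url("http://i.imgur.com/abc123.png") returns the link unchanged
# image_url("http://imgur.com/abc123") returns "https://i.imgur.com/abc123.jpg"
```

Leaving albums unmatched is the "naive" part: a page that holds several images has no single direct link to rewrite to.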

permalink

Apache has a very decent authentication scheme. In Apache you define what are called "Authorization Realms". You can define these in your apache configuration files on a directory or site, or you can define them in a .htaccess file. Each of the Authorization Realm specifications will point to a password file and will look like this:

This configuration defines a few things: which users to allow, where the passwords are stored, and the name of the Authorization Realm. The name of the realm is important because you can have multiple layers of authentication. For example, you could have a folder that any valid user can access, and a subfolder that only 2 specified valid users can access. If the AuthName is the same for both, the user will only have to enter their username and password once, whereas if the AuthNames differ, the user will be required to authenticate into each realm separately, even if the password file is the same.

Important Security Note

Any time you use Authorization through Apache, you should ensure that your users are connecting with HTTPS, otherwise the passwords will be sent in plain text, and anyone listening in the middle could see and capture them.
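To see why this matters, assuming the common AuthType Basic setup: the browser sends the username and password in an Authorization header that is only Base64-encoded, not encrypted, so anyone who can read the request can reverse it. A short Python illustration with made-up credentials:

```python
import base64

def basic_auth_header(username: str, password: str) -> str:
    """Build the header value a browser sends for HTTP Basic authentication."""
    token = base64.b64encode(f"{username}:{password}".encode("utf-8")).decode("ascii")
    return f"Basic {token}"

def sniff_credentials(header_value: str) -> str:
    """What an eavesdropper can do with a captured header: simply decode it."""
    scheme, _, token = header_value.partition(" ")
    if scheme != "Basic":
        raise ValueError("not a Basic authorization header")
    return base64.b64decode(token).decode("utf-8")

header = basic_auth_header("alice", "s3cret")  # made-up credentials
print(header)                     # Basic YWxpY2U6czNjcmV0
print(sniff_credentials(header))  # alice:s3cret
```

No key is involved anywhere in that round trip, which is why HTTPS has to provide the confidentiality.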

permalink

How I Make Tough Website Decisions

by Stephen Fluin 2010.08.01

I need to make some decisions soon about MortalPowers.com. This includes questions about the appearance, functionality, the focus of my time, and what sort of browsers and codecs I want to support. To answer these questions, I'm going to try to go back to the original question, which is asking myself what my goals are, and why I am building this site.

What is my goal with this website? My goals are to increase traffic, to provide a place for my development projects to live, grow, and be shared, and finally to meet the needs of my visitors.

The last goal is going to be the most central, because it will inform the first two. But to pursue it, I need to know, as accurately as possible, who my visitors are. I'm going to use the last month as the window and break down my traffic from multiple sources (primarily Google Analytics and AWStats).

Who visits my site?

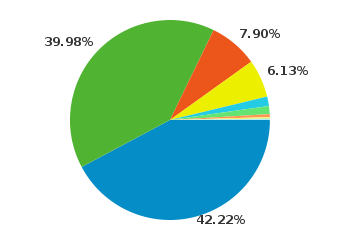

Browsers

Right out of the gate, 8% of my visitors will be unable to view any HTML5 videos. At this point, I'm comfortable instantly dropping the 8% of my visitors that still use Internet Explorer. This is partially a philosophical decision, but it's also in part because most of the people that come using that browser will have no interest in anything I have to say.

Only about 3% of my Firefox users will be unable to view my Ogg Theora videos, which indicates that Firefox users tend to update their browsers relatively frequently. This is great, because Firefox and Mozilla have committed to supporting a lot of the technologies I want to use.

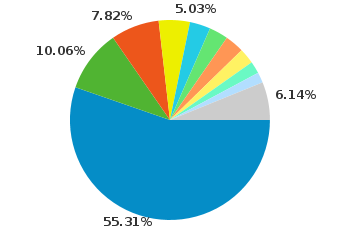

Operating Systems

A strong plurality of my users (45.5%) still use Windows to access my site. This is acceptable to me, because Windows users can still be interested in Open Source, in software development, or in trying or understanding Ubuntu, and I will do what I can to support them. As an interesting tidbit, my Windows traffic breaks down as follows: 43.9% Windows 7, 39.22% Windows XP, 14.03% Vista, and the rest (roughly 2.85%) Windows Server 2003.

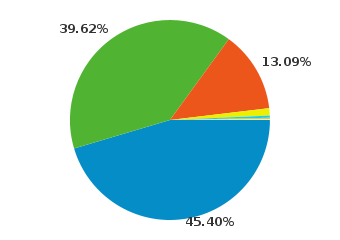

Screen Resolution

Fewer than 7% of my users have screens 1024 pixels wide; everyone else has a width of 1280 pixels or more.

What this means for Video

One of the goals that has most recently bubbled to the surface for me is supporting my video gallery on mobile. I'm continually disappointed with YouTube's mobile site, because its video collection is only a subset of the full gallery. Looking at the video support offered by my visitors' browsers, I can cover almost all of my desktop users with Ogg Theora, so until WebM becomes a universal standard, Theora will be my standard. I don't expect WebM to be available across my user base until at least 2012, but time will tell. On mobile, the only option is H.264. Based on these competing needs, I'm going to attempt to keep 2 encodings of each of my videos. I'm not sure yet whether this will mean a separate mobile version of the gallery or a single HTML5 video gallery with multiple sources, and I'm definitely open to feedback.
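
For the single-gallery option, HTML5 lets one `<video>` element list several `<source>` elements, and the browser plays the first one whose type it supports. Here is a minimal Python sketch that generates that markup for a clip with a Theora and an H.264 encoding. The `.ogv`/`.mp4` file naming, the `video_tag` helper, and the exact codecs strings (common examples for Theora/Vorbis and baseline H.264/AAC) are assumptions, not the site's actual setup.

```python
from html import escape
from typing import List, Tuple

# (file suffix, MIME type) for each encoding, in order of preference.
# Browsers use the first <source> whose type they can play, so Theora
# comes first and H.264 catches browsers (e.g. on mobile) that lack it.
ENCODINGS: List[Tuple[str, str]] = [
    (".ogv", 'video/ogg; codecs="theora, vorbis"'),
    (".mp4", 'video/mp4; codecs="avc1.42E01E, mp4a.40.2"'),
]


def video_tag(basename: str) -> str:
    """Return one <video> element offering every encoding of a clip."""
    lines = ["<video controls>"]
    for suffix, mime in ENCODINGS:
        src = escape(basename + suffix, quote=True)
        # escape() turns the inner double quotes of the codecs list into &quot;
        lines.append(f'  <source src="{src}" type="{escape(mime, quote=True)}">')
    # Shown only by browsers with no HTML5 video support at all.
    lines.append("  Your browser does not support HTML5 video.")
    lines.append("</video>")
    return "\n".join(lines)


print(video_tag("videos/example-clip"))
```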

Over the next few months and years, I'm anticipating that mobile usage of my website will grow from the lowly 1% to 20%-50%. Google itself has declared a "mobile first" strategy, and the human workflow of using a phone seems to be much more natural than the bulky process of using a laptop or desktop computer, despite the speed, cost, and additional capabilities provided by desktop computers.

I hate the idea of supporting H.264, but until WebM is available, I'm going to have to decide in favor of my users rather than in favor of a philosophy that Android, the iPhone, and Internet Explorer seem to have already rejected. It still makes no sense to me why mobile phones and Internet Explorer don't at least offer Theora video decoding, since the software is free (as in speech) for them to implement.

Example Virtual Host Apache Configuration

by Stephen Fluin 2010.07.14

Someone asked for an example of Apache Virtual Hosts configuration. All of my virtual hosts have the same format as the following two examples:

Types of Virtual Host Configurations

Name Based Virtual Hosts

There are two main types of virtual host configuration. The first, called "name based" virtual hosting, matches the examples above. In this case, the domain name provided as part of the request determines which site Apache serves. Unless both the server and the browser support Server Name Indication (SNI), name based hosting does not work with SSL, because Apache doesn't know the requested domain name until AFTER the encryption negotiation is complete.
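
As a rough illustration of name based matching, here is a Python sketch of the selection step: compare the request's `Host` header against each virtual host's ServerName and ServerAlias values, and fall back to the first virtual host defined for that address when nothing matches (Apache's documented default). The `VirtualHost` class, the example names, and the simplifications (no wildcard aliases, no IPv6 literals in `Host`) are assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Sequence, Tuple


@dataclass
class VirtualHost:
    server_name: str
    document_root: str
    aliases: Tuple[str, ...] = ()  # ServerAlias values


def select_vhost(vhosts: Sequence[VirtualHost], host_header: str) -> VirtualHost:
    """Pick the name based virtual host for a request's Host header."""
    # Drop an optional ":port" suffix; host names compare case-insensitively.
    name = host_header.split(":", 1)[0].lower()
    for vh in vhosts:
        names = {vh.server_name.lower(), *(a.lower() for a in vh.aliases)}
        if name in names:
            return vh
    # No match: the first virtual host listed acts as the default site.
    return vhosts[0]


sites = [
    VirtualHost("www.example.com", "/var/www/example", ("example.com",)),
    VirtualHost("blog.example.org", "/var/www/blog"),
]
print(select_vhost(sites, "blog.example.org").document_root)  # /var/www/blog
print(select_vhost(sites, "Example.com:80").document_root)    # /var/www/example
print(select_vhost(sites, "unknown.test").document_root)      # /var/www/example
```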

Address Based Virtual Hosts

The second type of virtual host is address based hosting, which is only useful when the server has multiple IP addresses (or listens on multiple ports). The server uses the interface the request arrived on (server IP or port) to determine which site to respond with. This is almost always used to set up SSL hosting alongside name based hosting.
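
Address based selection can be sketched the same way. This minimal Python version assumes a hypothetical table keyed by the local (IP, port) pair: an exact IP:port entry wins, a wildcard `*` entry for the same port comes next, and otherwise the main server configuration handles the request. In real Apache, several name based hosts can share one address, and the name based step then chooses among them, which is how the two types combine.

```python
from typing import Dict, Tuple

# Hypothetical table: (local IP or "*", local port) -> DocumentRoot
AddressTable = Dict[Tuple[str, int], str]


def select_by_address(table: AddressTable, local_ip: str, local_port: int,
                      main_root: str) -> str:
    """Choose a site from the interface (IP and port) the request arrived on."""
    # An exact IP:port entry is more specific than a wildcard *:port entry.
    for key in ((local_ip, local_port), ("*", local_port)):
        if key in table:
            return table[key]
    # No virtual host matched: the main server configuration answers.
    return main_root


table: AddressTable = {
    ("192.0.2.10", 443): "/var/www/secure",  # SSL site on its own IP
    ("*", 80): "/var/www/shared",            # plain HTTP on every IP
}
print(select_by_address(table, "192.0.2.10", 443, "/var/www/html"))  # /var/www/secure
print(select_by_address(table, "192.0.2.11", 80, "/var/www/html"))   # /var/www/shared
print(select_by_address(table, "192.0.2.11", 443, "/var/www/html"))  # /var/www/html
```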

Example address based virtual hosts:

Dynamic Virtual Hosts

The third type (which is new to me) is mass, or dynamically configured, virtual hosting. These hosts use the IP address or domain name of the request to determine the site's DocumentRoot. See the Apache documentation for mod_vhost_alias for the details. Example of what would go in your httpd.conf below:

When used with VirtualDocumentRoot, %0 is replaced with the full domain name of the request.
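
To show how the interpolation works beyond %0, here is a minimal Python sketch of a subset of the rules described in the mod_vhost_alias documentation: %0 is the whole name, %N the Nth dot-separated part, %-N the Nth part from the end, %N+ that part and everything after it, %-N+ that part and everything before it, %% a literal percent sign, and a single underscore when N is out of range. The `interpolate` function is hypothetical, and it deliberately skips the %N.M character form and %p (port).

```python
import re

# Matches %% or %[-]N[+]; %N.M and %p are deliberately not handled.
_TOKEN = re.compile(r"%%|%(-?\d+)(\+?)")


def interpolate(template: str, server_name: str) -> str:
    """Expand mod_vhost_alias-style %-directives against a server name."""
    parts = server_name.split(".")

    def expand(m: re.Match) -> str:
        if m.group(0) == "%%":
            return "%"
        n, plus = int(m.group(1)), m.group(2) == "+"
        if n == 0:
            return server_name  # %0: the whole name
        # Convert 1-based (or negative, from-the-end) N to a list index.
        idx = n - 1 if n > 0 else len(parts) + n
        if not 0 <= idx < len(parts):
            return "_"  # out of range: a single underscore
        if plus:
            # %N+ keeps this part and the rest; %-N+ keeps this part and before it.
            kept = parts[idx:] if n > 0 else parts[: idx + 1]
            return ".".join(kept)
        return parts[idx]

    return _TOKEN.sub(expand, template)


name = "www.example.com"
print(interpolate("/var/www/%0", name))        # /var/www/www.example.com
print(interpolate("/var/www/%2/%1", name))     # /var/www/example/www
print(interpolate("/var/www/%-1/%-2+", name))  # /var/www/com/www.example
```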
